Video Content Management System: Streamline Your Workflow

01/05/2023

Video makes up a growing share of marketing material. From social networking platforms to e-learning websites, it is quickly becoming the primary way people consume content online. As the volume of video grows, businesses are finding it challenging to manage, edit, and distribute these assets.

A Video Content Management System (Video CMS) can help with this. In this post, we will define a video content management system, explain why you need one, and explore the essential factors to consider when selecting a Video CMS for your company.

What is a Video Content Management System?

A Video Content Management System is a consolidated platform for managing video assets in enterprises. It offers facilities for uploading, storing, editing, and distributing videos over different channels.

The system can store all of your raw and edited files. A full-featured Video CMS can also organize video files by size, video type, category, and other criteria.

In addition, a Video CMS provides strong analytics and reporting tools that help organizations track the performance of their video content.

Source: Nghe Content

A video content management system is crucial for enterprises that create large volumes of video material. It improves workflow by offering a centralized platform for managing all video assets. It also removes the need to upload and distribute videos manually, which is time-consuming and error-prone. The platform automates these tasks, saving businesses time and effort.

Why do you need a Video CMS?

There are several reasons why your business needs a Video CMS. Let’s discuss some key benefits of using a Video Content Management System.

Source: Nhật Nam Media

Easy Access to All Video Assets

Access to all video assets is one of the key advantages of using a Video CMS. A video content management system provides a consolidated platform for storing and organizing video assets.

It eliminates the need for several separate storage locations and allows team members to access videos from anywhere. With a Video CMS, you can save time and effort by quickly searching for and finding the videos you need.

Cloud-based Editing Tools

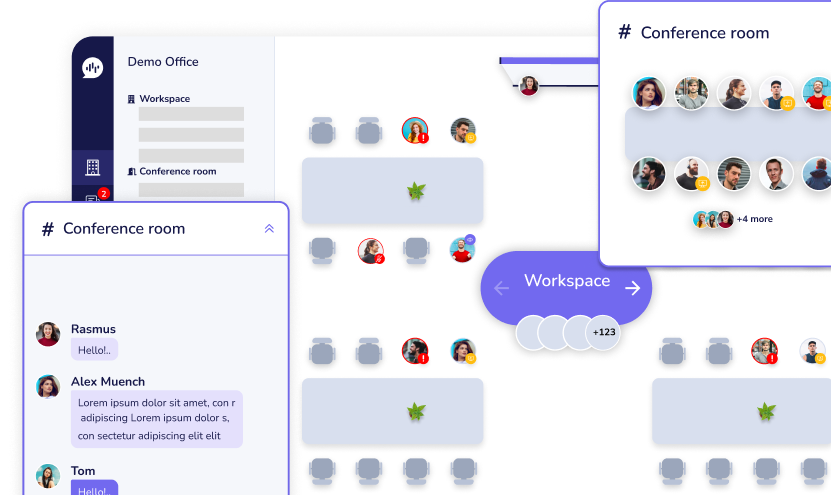

A video content management system provides cloud-based editing capabilities, allowing team members to collaborate on video projects from anywhere. Team members can work on the same video project at the same time, eliminating the need to send files back and forth. This improves the efficiency and scalability of the editing process.

Real-time Collaboration Among Team Members

A video content management system enables team members to work on video creation projects in real time. It serves as a shared space for giving feedback, adding annotations, and discussing improvements. This real-time connection helps teams work together even when they are spread across the globe.

Furthermore, features such as file distribution, social network publishing, and format conversion can be combined into automated workflows. Automated workflows streamline the process of producing and delivering video.
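To make the idea of an automated workflow concrete, here is a minimal Python sketch that chains format conversion, file distribution, and social publishing into one pipeline. Everything in it (`VideoJob`, `convert_format`, `distribute_file`, `publish_to_social`, `run_workflow`) is a hypothetical name chosen for illustration, not the API of any particular Video CMS, and each step only records what a real system would do.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class VideoJob:
    """Hypothetical record for one video moving through a workflow."""
    filename: str
    log: List[str] = field(default_factory=list)


def convert_format(job: VideoJob) -> VideoJob:
    # Placeholder step: a real system would call a transcoder here.
    stem = job.filename.rsplit(".", 1)[0]
    job.filename = stem + ".mp4"
    job.log.append("converted to mp4")
    return job


def distribute_file(job: VideoJob) -> VideoJob:
    # Placeholder step: a real system would copy the file to shared storage.
    job.log.append("distributed to team storage")
    return job


def publish_to_social(job: VideoJob) -> VideoJob:
    # Placeholder step: a real system would call each platform's upload API.
    job.log.append("published to social networks")
    return job


def run_workflow(job: VideoJob, steps: List[Callable[[VideoJob], VideoJob]]) -> VideoJob:
    """Run each step in order, passing the job from one step to the next."""
    for step in steps:
        job = step(job)
    return job


job = run_workflow(
    VideoJob("launch-teaser.mov"),
    [convert_format, distribute_file, publish_to_social],
)
print(job.filename)
print(job.log)
```

Because the workflow is just an ordered list of steps, a team could add, remove, or reorder steps without changing the rest of the pipeline.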

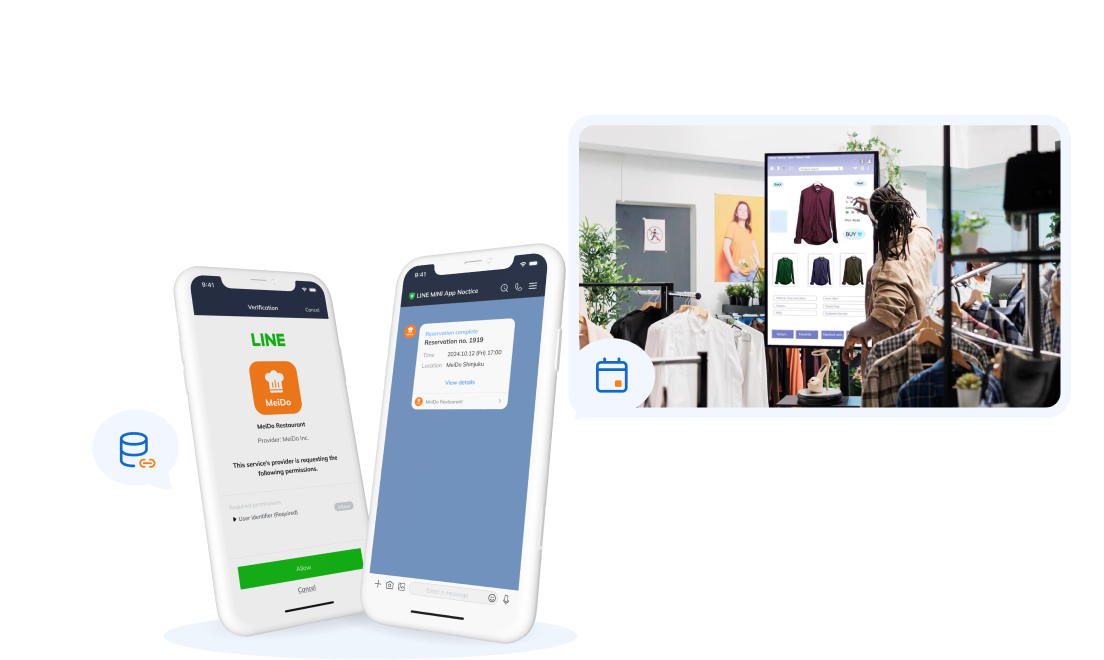

Multi-platform Distribution

A reputable video content management system gives you strong options for distributing videos across platforms. Businesses can publish videos to social media platforms, e-learning websites, and other channels with a few clicks. As a result, businesses are more likely to reach their target audience wherever that audience consumes content.

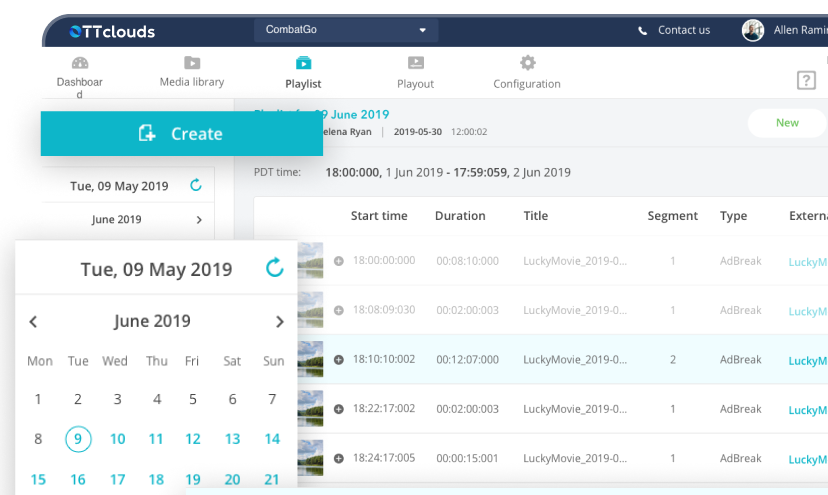

Advanced Analytics and Reporting

Monitoring your video metrics is the best way to determine how well your video strategy works. Using the analytics dashboard in the video CMS, you can see which videos are the most popular, how long visitors spend viewing each one on average, which videos performed well, when viewers stop watching a video, and other information.

A video content management system has comprehensive analytics and reporting features that allow businesses to track the performance of their video content. Furthermore, this data can be used to optimize video content and boost its performance.
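As a rough illustration of the metrics described above, the following Python sketch computes view counts, average watch time, and completion rate from a small list of view events. The event format and the sample data are assumptions made up for this example; a real Video CMS would expose its own analytics data in its own shape.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical view events: (video_id, seconds_watched, video_length_seconds)
views = [
    ("intro", 30, 60),
    ("intro", 60, 60),
    ("demo", 20, 120),
    ("demo", 40, 120),
    ("demo", 120, 120),
]


def summarize(events):
    """Group view events by video and compute simple per-video metrics."""
    watched = defaultdict(list)
    lengths = {}
    for video_id, seconds, length in events:
        watched[video_id].append(seconds)
        lengths[video_id] = length

    report = {}
    for video_id, seconds_list in watched.items():
        # A view counts as completed when the viewer reached the end.
        completed = sum(1 for s in seconds_list if s >= lengths[video_id])
        report[video_id] = {
            "views": len(seconds_list),
            "avg_watch_seconds": mean(seconds_list),
            "completion_rate": completed / len(seconds_list),
        }
    return report


report = summarize(views)
most_popular = max(report, key=lambda v: report[v]["views"])
print(most_popular, report[most_popular])
```

A low completion rate on a popular video is the kind of signal that could prompt a shorter edit or a stronger opening.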

Key Considerations When Choosing a Video CMS for Your Business

When choosing a Video CMS for your business, there are several key considerations to keep in mind. Let’s discuss some of the most important factors to weigh when selecting a video content management system.

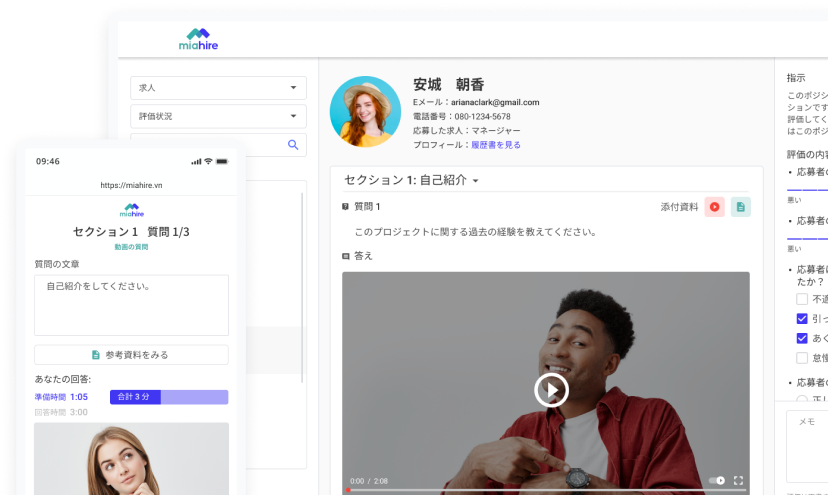

Source: Shopify

Supported Video Formats and Compatibility

One of the most important factors when evaluating a video content management system is the range of video formats it supports. A high-quality system should handle a wide range of video formats, including popular ones like MP4, AVI, MOV, and WMV. This ensures that users can upload and distribute videos in the format that is most convenient for them.

Furthermore, the system must be compatible with many devices, browsers, and platforms, guaranteeing people can access and view videos regardless of their device or platform. Users should be able to watch videos without difficulties on desktops, laptops, tablets, or smartphones.

In short, to provide an outstanding user experience, a video content management system must emphasize support for various video formats as well as compatibility across devices and platforms.
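One simple way to picture format support is an upload check against a list of accepted file extensions. The Python sketch below assumes a hypothetical `SUPPORTED_EXTENSIONS` set built from the formats named above and a hypothetical `is_supported` helper. It only inspects the file name, so it does not verify the actual codec or container, which a production system would also need to do.

```python
from pathlib import Path

# Assumed list of accepted formats, taken from the examples in this article.
SUPPORTED_EXTENSIONS = {".mp4", ".avi", ".mov", ".wmv"}


def is_supported(filename: str) -> bool:
    """Return True if the file extension is in the supported set (case-insensitive)."""
    return Path(filename).suffix.lower() in SUPPORTED_EXTENSIONS


uploads = ["webinar.MP4", "promo.mov", "notes.txt", "clip.mkv"]
accepted = [name for name in uploads if is_supported(name)]
rejected = [name for name in uploads if not is_supported(name)]
print("accepted:", accepted)
print("rejected:", rejected)
```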

Integration with Existing Software and Tools

It is critical to assess the Video CMS’s integration capabilities. The platform should be able to integrate with other corporate tools and systems, such as social media platforms, e-learning systems, and analytics tools. This integration simplifies the management and distribution of video content across different channels.

Ensure that the video CMS you choose can be readily integrated with key parts of your IT infrastructure, such as video conferencing systems like Webex and team collaboration solutions like Microsoft Teams, SharePoint, and OneDrive. Also consider how well the CMS can adapt as your business needs change in the future.

Security and Privacy Features

Security should be a top priority when selecting a Video CMS. The platform should include strong protections that guard your video assets against theft and unauthorized access. Choose a system that includes encryption, authentication, and other security features to keep your video content safe.

You want to be sure that your video content is secure and that you have complete control over who may view it.

Source: HubSpot Blog

Partner with SupremeTech to Find the Best Video CMS for Your Business

A video content management system can help you optimize your video workflow, enhance your company’s productivity, and safeguard your video material. Consider the supported video formats, integration with existing software and tools, and security and privacy features when selecting a Video CMS for your company.

Partner with SupremeTech if you want the best video content management solution for your company. We offer a variety of Video CMS solutions tailored to your requirements, including sophisticated capabilities such as automated video transcoding, personalized branding, and enterprise-level security. To learn more, please don’t hesitate to contact us.
