OTT Vs CTV: Unraveling the Best Choice for Your Business

21/05/2023

In the ever-changing landscape of digital marketing, businesses have numerous options for reaching their target audience. Over-the-top (OTT) and connected TV (CTV) are two of the fastest-growing platforms of the 21st century. As competition between the two intensifies, one question looms large: which platform is the best choice for your business?

In this article, we’ll break down the key distinctions between OTT and CTV services to help companies select the right option for their specific requirements and goals.

Understanding Both: What Are OTT and CTV?

OTT (Over-The-Top) and CTV (Connected TV) are two rising terms in the realm of television and video content. While they are interconnected, understanding the distinction between OTT and CTV is crucial for comprehending the modern viewing experience.

What is OTT (Over-the-top)?

Over-the-top (OTT) refers to content providers that distribute streaming media as a standalone product directly to viewers over the Internet, bypassing traditional telecommunications, multichannel television, and broadcast television platforms. The name reflects the goal: content goes “over the top” of these established middlemen.

In other words, over-the-top (OTT) TV services enable viewers to watch shows online rather than via traditional broadcast methods such as an aerial or satellite dish installed on the roof.

Some examples of OTT services include:

- Netflix

- Hulu

- Amazon Prime Video

- Disney+

- HBO Max

What is CTV (Connected TV)?

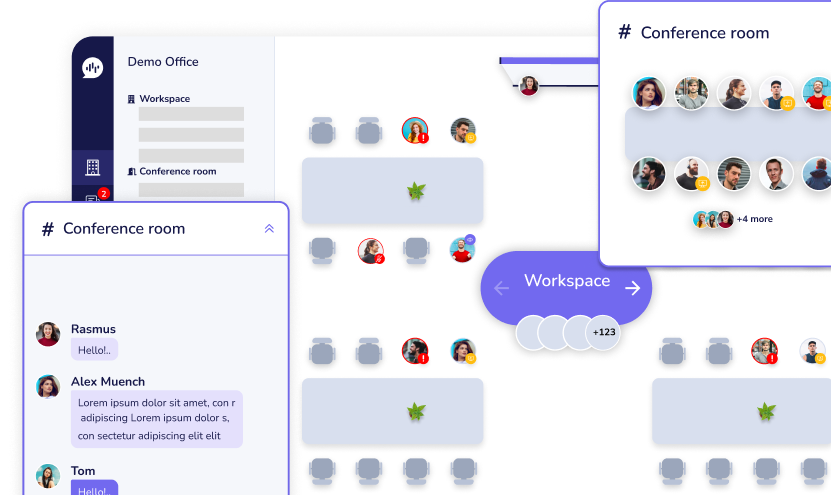

“Connected TV” (CTV) is an umbrella term for any TV that can connect to the Internet and access content beyond what a cable or satellite provider delivers. No cable or satellite subscription is required. CTV therefore covers smart TVs and IPTVs as well as conventional TVs that reach the Internet through an attached OTT device.

Most people access OTT services like Hulu and Netflix through CTV, which offers its own set of advantages for advertisers. OTT content is not limited to CTV, however: smartphones, tablets, and PCs can access it as well.

While this may sound confusing, the key thing to remember when comparing CTV vs OTT is that OTT is the content and its delivery method, while CTV is one of the devices on which that content is watched.

Some examples of CTV are:

- Roku TV

- Amazon Fire TV

- Apple TV

- Samsung Smart TV

- Google Chromecast

- Android TV

The Growing Impact of OTT and CTV in the Business World

Hyper-personalized Experience

Although traditional linear TV still plays an indispensable role in reaching a considerable proportion of the population, OTT content stands out by customizing each viewer’s experience. With OTT advertisements, enterprises can target specific demographics by age, gender, location, financial capability, ethnicity, and similar attributes. Companies can narrow in on their ideal audience rather than broadcasting to everyone, as they would with TV commercials.

More Data, Better Precision

Thanks to OTT advertising technologies, enterprises can target audiences based on age, gender, location, hobbies, and viewing habits. Linear TV, by contrast, relies on broad data from Comscore and Nielsen, which carries large margins of error and supports top-of-funnel advertising at best.

Eliminate the Intermediary

By running OTT campaigns in-house through demand-side platforms (DSPs), companies have more say over where their ads appear. Programmatic technology lets you make rapid, cost-effective decisions about where and when your ads are shown, down to specific programs and audiences.

More Effective Measurement

Digital marketers are increasingly turning to more sophisticated methods than KPIs such as video completion rate (VCR) to evaluate CTV. Brands can gauge the effectiveness of their media tactics with more advanced CTV measurement capabilities, such as attribution against onsite page visits or actions, and with third-party solutions that measure foot traffic or brand lift.
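As a baseline for the more advanced methods mentioned above, VCR is read here as video completion rate: the share of ad impressions that were watched to the end. The sketch below assumes that definition (some platforms divide by video starts instead), and the function name and figures are hypothetical, chosen only for illustration.

```python
def video_completion_rate(completed_views: int, impressions: int) -> float:
    """Share of ad impressions that played through to the end.

    Assumes VCR = completed views / impressions; definitions vary by platform.
    """
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    if not 0 <= completed_views <= impressions:
        raise ValueError("completed_views must be between 0 and impressions")
    return completed_views / impressions


# Hypothetical campaign figures, for illustration only.
vcr = video_completion_rate(completed_views=9_200, impressions=10_000)
print(f"VCR: {vcr:.1%}")  # prints "VCR: 92.0%"
```

A high VCR only shows that ads were watched, which is why it is usually paired with attribution or brand-lift measurement.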

CTV Vs OTT: Comparing the Differences

If a company wants to reach its intended demographic via digital advertising, it must have a firm grasp of the distinctions between CTV and OTT. While both platforms offer unique opportunities to reach viewers in the digital streaming space, they have distinct characteristics that set them apart.

| Factors | OTT (Over-the-top) | CTV (Connected TV) |
|---|---|---|
| Audience reach and demographics | • Provides a vast audience reach with diverse demographics. • Appeals to viewers of all ages, interests, and geographic locations. • Accessible through various devices. | • Specifically targets viewers who consume content on television or TV-connected devices. • Offers a more traditional TV viewing experience, appealing to households. |
| Pricing models | Offers flexible pricing models including: • Subscription-based: Pay a recurring fee for unlimited access to content (e.g., Netflix, Hulu). • Ad-supported: Access content for free but must view ads during playback (e.g., YouTube, Crackle). • Hybrid models: Combine subscription and ad-supported options. | • Primarily follows ad-supported models, allowing viewers to access content for free while watching ads. • Some provide ad-free watching for a monthly cost through a membership program. |
| Ad targeting capabilities | • Uses advanced ad targeting capabilities based on user data and behavior. • Enables advertisers to target specific demographics. • Leverages data collected from user accounts and interactions to deliver personalized and relevant ads. | • Provides similar ad targeting capabilities to OTT platforms. • Utilizes data collected from smart TVs and streaming devices. • Allows for targeted advertising to the appropriate people at the correct time. |
| User behavior and engagement | • Offers high user engagement through on-demand content consumption. • Users can freely choose their preferred watching option. • Provides interactive features, personalized recommendations, and additional content options. | • Provides a lean-back viewing experience similar to traditional TV. • Offers a more relaxed and passive content consumption experience. • Limited user control over content playback, with essential functions handled by remote controls. |

Picking the Right Platform for Different Business Models

With the proliferation of CTV (Connected TV) and OTT (Over-The-Top) platforms, it’s becoming more important to grasp their differences in order to choose the one that best fits certain business models.

OTT for B2C Businesses

OTT platforms are generally more suitable for B2C (Business-to-Consumer) businesses.

White-label OTT platforms are focused on reaching customers where they already are – on their smart TVs, smartphones, and other streaming devices. B2C businesses can leverage OTT platforms to reach a larger audience, engage with consumers, and monetize their content through subscription or ad-supported models.

On the other hand, B2B (Business-to-Business) businesses typically have a narrower target audience and require communication channels that cater specifically to professionals and industry-related needs. B2B businesses often rely on industry-specific events, trade shows, professional networks, and direct business partnerships to reach and engage their target audience.

CTV for B2B Businesses

Connected TV (CTV) serves a different purpose from OTT. CTV advertising is generally more suitable for B2B (Business-to-Business) businesses.

Let’s take a look at why CTV is well suited to B2B businesses:

- Exact targeting: B2C businesses using white-label OTT services prioritize reaching as many viewers as possible. CTV, by contrast, enables accurate targeting at the individual level by using third-party databases with information on firm size, industry, job type, intent, and other criteria common in B2B media buying. The data generated from CTV is said to be accurate and to provide more valuable insights.

- Optimal Cost: CTV advertising generally has a higher CPM (Cost Per Mille, the cost per thousand impressions) than other forms of advertising. However, once you account for the exact targeting and the reduced waste, the effective cost of reaching the right audience is substantially lower.
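The cost argument above can be made concrete with a little arithmetic. The sketch below assumes a simple model in which the effective CPM is the nominal CPM divided by the share of impressions that reach the intended audience. The function name and all figures are hypothetical and only illustrate the reasoning; they are not market rates.

```python
def effective_cpm(cpm: float, on_target_share: float) -> float:
    """Cost per 1,000 impressions that actually reach the intended audience.

    Assumes wasted impressions have no value, so cost is spread only over
    the on-target share (a fraction in the range (0, 1]).
    """
    if cpm < 0:
        raise ValueError("cpm must be non-negative")
    if not 0 < on_target_share <= 1:
        raise ValueError("on_target_share must be in (0, 1]")
    return cpm / on_target_share


# Hypothetical figures, for illustration only.
broad = effective_cpm(cpm=12.0, on_target_share=0.125)  # cheap, mostly wasted
targeted = effective_cpm(cpm=40.0, on_target_share=0.5)  # pricier, well targeted

print(f"Broad campaign:    ${broad:.2f} per 1,000 on-target impressions")
print(f"Targeted campaign: ${targeted:.2f} per 1,000 on-target impressions")
```

With these made-up inputs the broad campaign works out to $96.00 and the targeted one to $80.00 per thousand on-target impressions, so the higher nominal CPM still ends up cheaper. Whether that holds in practice depends on how accurate the targeting data really is.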

B2B businesses therefore tend to benefit most from CTV advertising, because it can reach and engage customers in a targeted manner. This capability is well suited to B2B businesses promoting their products, services, or brands.

Which Platform is Better for Startups, SMBs, and Large Corporations?

Small and medium-sized businesses (SMBs) frequently have limited resources and need inexpensive ways to reach their customers. OTT platforms provide a more affordable option in this regard, with many price tiers.

Large businesses, on the other hand, may have the resources to explore both OTT and CTV options. The extensive reach and sophisticated ad-targeting capabilities of OTT platforms are a boon for large firms with a business-to-consumer (B2C) emphasis, while CTV platforms may be more appropriate for B2B-focused organizations.

Embrace Change and Make The Right Choices with SupremeTech

When deciding between OTT and CTV for your organization, it is important to thoroughly consider your individual needs, target audience, and marketing goals. The benefits of OTT and CTV platforms are distinct, and each serves a different kind of company.

As you navigate this decision-making process, consider engaging with a trusted technology partner like SupremeTech. As a product-driven development company with expertise in digital solutions and a commitment to exceptional results, we pride ourselves on providing valuable insights and support to help you make the best choice for your business.

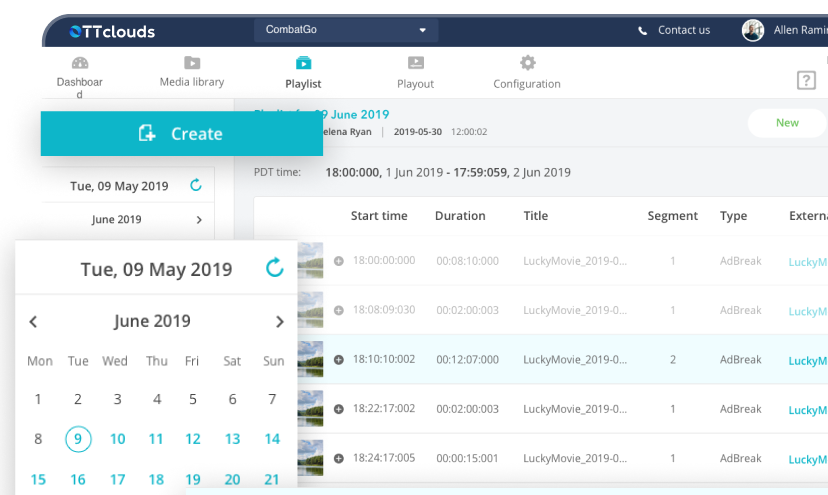

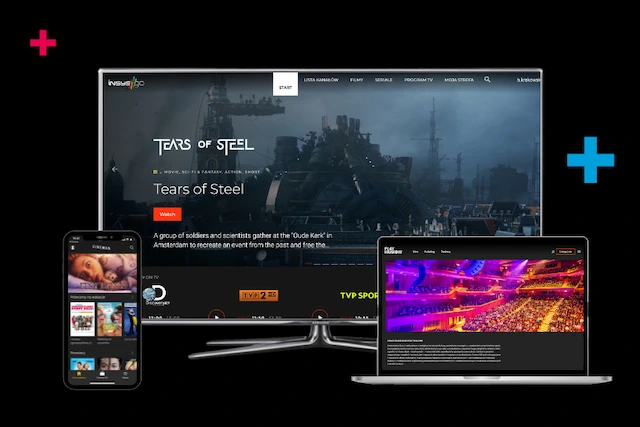

One solution you may want to explore is OTTclouds, an OTT streaming solution offered by SupremeTech. OTTclouds provides a comprehensive platform for streaming media content and can be a valuable asset in reaching your target audience effectively. Don’t hesitate to contact us for prompt advice and support!