Maximizing ROI with Shopify Plus Checkout Extensibility: Key Insights and Case Studies

29/07/2023


Discover how Shopify Plus Checkout Extensibility empowers e-commerce businesses to boost their Return on Investment (ROI). Uncover key insights and real-life case studies showcasing how customizing the checkout process leads to increased conversions, reduced cart abandonment, and enhanced customer engagement. Unlock the potential of Shopify’s powerful extensibility feature to maximize your store’s profitability and stay ahead in the competitive online marketplace.

Understanding Shopify Plus Checkout Extensibility

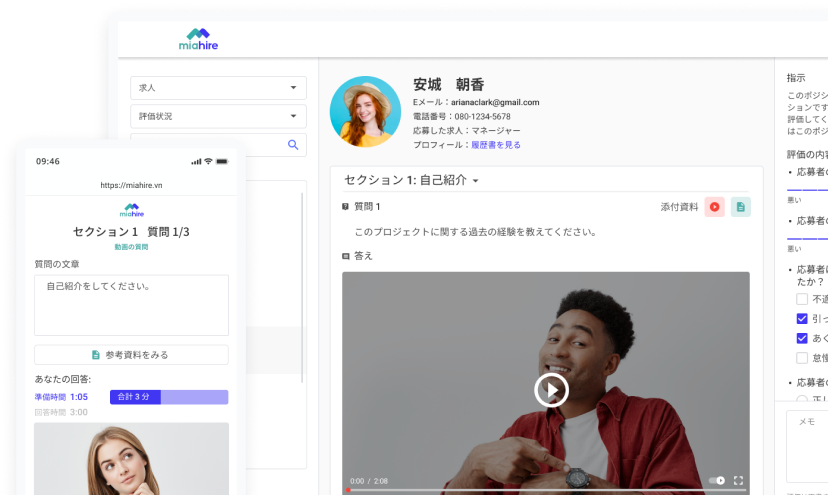

Shopify Plus Checkout Extensibility is a powerful feature that allows e-commerce businesses on the Shopify Plus platform to customize and tailor their checkout process. Unlike the standard Shopify checkout, which offers limited customization options, the extensibility feature empowers store owners to create a more personalized and seamless checkout experience for their customers.

What is Shopify Plus Checkout Extensibility?

At its core, Shopify Plus Checkout Extensibility replaces direct edits to the checkout’s code (the older checkout.liquid approach) with upgrade-safe, app-based extension points. Businesses can still make custom modifications to the checkout process and adapt it to their unique requirements: checkout UI extensions add content at defined points in the page, the Branding API controls its visual style, and Shopify Functions run custom backend logic. This level of control makes it possible to build a checkout that aligns with the brand’s image and caters to the specific needs of its target audience.

Through these extension points, Shopify Plus Checkout Extensibility opens up a wide range of possibilities for store owners and developers. They can add custom fields and content, implement custom logic, integrate with third-party services, and customize which payment and delivery options are shown and in what order. This flexibility makes the checkout an integral part of the overall customer experience, enhancing satisfaction and reducing friction during the final stages of the buying journey.
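In Checkout Extensibility, custom logic of this kind typically runs as a Shopify Function, a small program that receives checkout data as input and returns a decision. The sketch below shows the kind of rule a validation Function might encode, written as a plain TypeScript function. The `CartLine` and `ValidationError` types, the `validateCart` name, and the five-per-SKU limit are hypothetical simplifications, not Shopify's actual Function input and output schema.

```typescript
// Hypothetical, simplified shapes; Shopify's real Function input/output schemas differ.
interface CartLine {
  sku: string;
  quantity: number;
}

interface ValidationError {
  sku: string;
  message: string;
}

// Assumed business rule: at most `maxPerSku` units of any one SKU per order.
function validateCart(lines: CartLine[], maxPerSku = 5): ValidationError[] {
  // Sum quantities per SKU, since the same item can appear on several lines.
  const totals = new Map<string, number>();
  for (const line of lines) {
    totals.set(line.sku, (totals.get(line.sku) ?? 0) + line.quantity);
  }

  const errors: ValidationError[] = [];
  totals.forEach((qty, sku) => {
    if (qty > maxPerSku) {
      errors.push({ sku, message: `Limit of ${maxPerSku} per order for ${sku} (cart has ${qty}).` });
    }
  });
  return errors; // an empty array means checkout can proceed
}

const cartErrors = validateCart([
  { sku: "TSHIRT-M", quantity: 3 },
  { sku: "TSHIRT-M", quantity: 4 },
  { sku: "MUG", quantity: 1 },
]);
console.log(cartErrors.map((e) => e.message)); // one error: TSHIRT-M totals 7 units
```

Keeping the rule a pure function of its input makes it straightforward to unit-test before wiring it into a real Function.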

Benefits of Shopify Plus Checkout Extensibility

The advantages of leveraging Shopify Plus Checkout Extensibility are numerous. First, it allows businesses to create a seamless, frictionless checkout process. When fewer steps stand between shoppers and a completed purchase, they are more likely to follow through, which reduces cart abandonment and ultimately increases conversions.

Secondly, the ability to customize the checkout process opens up opportunities for personalized marketing and promotions. Store owners can use customer data to display relevant upsells, cross-sells, or discounts during checkout, enticing customers to add more items to their carts and boosting the average order value.
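A minimal sketch of how customer data might decide which upsells appear: skip products already in the cart, rank offers from categories the shopper has bought before ahead of others, and break ties by popularity. The `Offer` type, its fields, the `pickUpsells` name, and the ranking rule are assumptions for illustration; in practice this logic could sit in an app backend that a checkout UI extension queries.

```typescript
// Hypothetical offer record.
interface Offer {
  productId: string;
  category: string;
  unitsSold: number; // popularity signal used to break ties
}

// Choose up to `limit` upsell offers for one shopper.
function pickUpsells(
  offers: Offer[],
  cartProductIds: Set<string>,
  pastCategories: Set<string>,
  limit = 2,
): Offer[] {
  return offers
    .filter((o) => !cartProductIds.has(o.productId)) // never offer what is already in the cart
    .sort((a, b) => {
      const aMatch = pastCategories.has(a.category) ? 1 : 0;
      const bMatch = pastCategories.has(b.category) ? 1 : 0;
      // Familiar categories first, then best sellers.
      return bMatch - aMatch || b.unitsSold - a.unitsSold;
    })
    .slice(0, limit);
}

// Invented sample data.
const picks = pickUpsells(
  [
    { productId: "case", category: "accessories", unitsSold: 120 },
    { productId: "charger", category: "accessories", unitsSold: 300 },
    { productId: "socks", category: "apparel", unitsSold: 900 },
    { productId: "cable", category: "accessories", unitsSold: 50 },
  ],
  new Set<string>(["charger"]),
  new Set<string>(["accessories"]),
);
console.log(picks.map((o) => o.productId)); // case, then cable
```

Because the ranking is isolated in one comparator, a different signal (margin, stock level) can be swapped in without touching the rest of the checkout code.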

Furthermore, Shopify Plus Checkout Extensibility enables integration with various third-party tools and services. Businesses can connect their checkout process to marketing automation platforms, customer relationship management (CRM) systems, or shipping carriers, streamlining operations and enhancing efficiency.
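Integrations like these commonly start from Shopify webhooks (for example, when an order is created). Before forwarding an order to a CRM or shipping system, the receiving endpoint should verify the `X-Shopify-Hmac-Sha256` header, which carries a base64-encoded HMAC-SHA256 of the raw request body keyed with the app's secret. A minimal sketch using Node's built-in `crypto` module; the secret and payload are placeholders, and the HTTP server and the CRM call are left out.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// True when `hmacHeader` (base64) matches the HMAC-SHA256 of `rawBody` keyed with `secret`.
function verifyWebhook(rawBody: string, hmacHeader: string, secret: string): boolean {
  const expected = createHmac("sha256", secret).update(rawBody, "utf8").digest();
  const received = Buffer.from(hmacHeader, "base64");
  // timingSafeEqual throws if lengths differ, so compare lengths first.
  return received.length === expected.length && timingSafeEqual(received, expected);
}

// Placeholder secret and payload, for illustration only.
const secret = "example-app-secret";
const rawBody = JSON.stringify({ id: 1001, email: "buyer@example.com" });
const header = createHmac("sha256", secret).update(rawBody, "utf8").digest("base64");

console.log(verifyWebhook(rawBody, header, secret)); // true
console.log(verifyWebhook(rawBody + " ", header, secret)); // false
```

Compute the HMAC over the exact body received; re-serializing parsed JSON can change whitespace or key order and make a valid request fail verification.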

Customization Options for Checkout Process

Custom UI and content

With Shopify Plus Checkout Extensibility, store owners can add custom UI and content, such as banners, custom fields, and product offers, at predefined extension points throughout the checkout, while keeping a consistent, branded look and feel that resonates with their target audience. Thoughtfully placed content can improve usability and ease of navigation, reducing the likelihood of cart abandonment due to confusion or frustration.

Branding API

Beyond what the checkout editor offers, the Branding API allows deeper changes to the checkout’s look and feel, such as custom colors, fonts, and layout settings that reflect the brand’s identity.

Backend business logic

Customizing backend business logic, which Checkout Extensibility handles through Shopify Functions, further enhances the checkout process by enabling personalized discounts, delivery options, and payment options based on specific customer attributes or purchase history.
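The sketch below is not the Shopify Functions API. It is a plain TypeScript function, with hypothetical `Customer` and `Discount` types and invented tier thresholds, that illustrates the kind of decision a discount rule might make from a customer's order history.

```typescript
// Hypothetical, simplified customer input.
interface Customer {
  numberOfOrders: number;
  isVip: boolean;
}

interface Discount {
  percentage: number; // 0 means no discount
  message: string;
}

// Assumed tiers: VIP customers 15%, repeat buyers (3+ orders) 5%, everyone else none.
function discountFor(customer: Customer | null): Discount {
  if (customer === null) return { percentage: 0, message: "" }; // guest checkout
  if (customer.isVip) return { percentage: 15, message: "VIP thank-you discount" };
  if (customer.numberOfOrders >= 3) return { percentage: 5, message: "Returning customer discount" };
  return { percentage: 0, message: "" };
}

// Apply the discount to a subtotal in cents, rounding to a whole cent.
function applyDiscount(subtotalCents: number, discount: Discount): number {
  return Math.round(subtotalCents * (1 - discount.percentage / 100));
}

const d = discountFor({ numberOfOrders: 4, isVip: false });
console.log(d.percentage, applyDiscount(12000, d)); // 5 11400
```

Ordering the checks from most to least specific keeps each customer in exactly one tier.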

Post-purchase page

Additionally, businesses can leverage Checkout Extensibility to design a post-purchase page that extends the brand experience beyond the checkout itself. This page can include order confirmation details, personalized thank-you messages, and related product recommendations, nurturing customer engagement even after the transaction is complete.
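One simple, common way to choose related products for such a page is co-purchase counting: recommend the items that most often appear in the same order as the one just bought. A minimal sketch, assuming order history is available as arrays of product IDs (a hypothetical input format; the `relatedProducts` helper and the data are invented).

```typescript
// Rank other products by how often they were bought together with `productId`.
function relatedProducts(orders: string[][], productId: string, limit = 3): string[] {
  const counts = new Map<string, number>();
  for (const order of orders) {
    const items = new Set(order); // ignore duplicate lines within one order
    if (!items.has(productId)) continue;
    items.forEach((other) => {
      if (other !== productId) counts.set(other, (counts.get(other) ?? 0) + 1);
    });
  }
  // Most frequently co-purchased first; ties broken alphabetically for stable output.
  return Array.from(counts.entries())
    .sort((a, b) => b[1] - a[1] || a[0].localeCompare(b[0]))
    .slice(0, limit)
    .map(([id]) => id);
}

// Invented order history.
const orderHistory = [
  ["phone", "case", "charger"],
  ["phone", "case"],
  ["laptop", "mouse"],
  ["phone", "earbuds", "case"],
  ["phone", "charger"],
];
console.log(relatedProducts(orderHistory, "phone")); // case, charger, earbuds
```

Co-purchase counts favor already-popular items; a production recommender would usually normalize for overall popularity.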

Track buyer behavior

Furthermore, one of the most valuable functionalities of Checkout Extensibility is the capacity to track buyer behavior throughout the checkout process. By gathering and analyzing data on customer interactions, businesses can gain invaluable insights into pain points, drop-off rates, and conversion patterns. Armed with this data, they can make informed decisions to optimize the checkout flow and increase overall conversions, ultimately maximizing their return on investment.
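Shopify exposes checkout behavior as customer events that web pixels can subscribe to. Assuming those events have been exported as simple `{ sessionId, name }` records (a hypothetical format), a first analysis step is counting how many distinct sessions reached each checkout step. The step names are modeled on Shopify's standard checkout event names; the record shape, the `sessionsPerStep` helper, and the sample data are invented for illustration.

```typescript
// Hypothetical exported event record.
interface CheckoutEvent {
  sessionId: string;
  name: string;
}

// Funnel steps in order (names modeled on Shopify's standard checkout events).
const STEPS = [
  "checkout_started",
  "checkout_shipping_info_submitted",
  "payment_info_submitted",
  "checkout_completed",
];

// Count distinct sessions that fired each step's event.
function sessionsPerStep(events: CheckoutEvent[]): Record<string, number> {
  const seen = new Map<string, Set<string>>();
  for (const step of STEPS) seen.set(step, new Set<string>());
  for (const e of events) {
    seen.get(e.name)?.add(e.sessionId); // unknown event names are ignored
  }
  const counts: Record<string, number> = {};
  for (const step of STEPS) counts[step] = seen.get(step)!.size;
  return counts;
}

const ev = (sessionId: string, name: string): CheckoutEvent => ({ sessionId, name });
const events: CheckoutEvent[] = [
  ev("s1", "checkout_started"),
  ev("s1", "checkout_shipping_info_submitted"),
  ev("s1", "payment_info_submitted"),
  ev("s1", "checkout_completed"),
  ev("s2", "checkout_started"),
  ev("s2", "page_viewed"),
  ev("s2", "checkout_shipping_info_submitted"),
  ev("s3", "checkout_started"),
  ev("s3", "checkout_started"), // a repeated event still counts the session once
  ev("s4", "checkout_started"),
  ev("s4", "checkout_shipping_info_submitted"),
  ev("s4", "payment_info_submitted"),
];
console.log(sessionsPerStep(events)); // 4, 3, 2 and 1 sessions per step
```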

How Checkout Extensibility Helps Maximize ROI

Checkout Extensibility on the Shopify Plus platform offers e-commerce businesses a powerful toolset to optimize their Return on Investment (ROI) by enhancing the checkout experience. Let’s explore how this feature contributes to higher conversion rates, reduced cart abandonment, increased customer retention, and the effective use of analytics and data insights.
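ROI itself is net gain relative to cost. As a worked illustration with invented numbers: a checkout project that costs $20,000 and is credited with $50,000 in additional gross profit over the measurement period has an ROI of (50,000 - 20,000) / 20,000 = 1.5, or 150%. The gain should be profit attributable to the change (ideally measured with an A/B test or a controlled before/after comparison), not raw revenue.

```typescript
// ROI as a fraction: (gain - cost) / cost. Multiply by 100 for a percentage.
function roi(gain: number, cost: number): number {
  if (cost <= 0) throw new Error("cost must be positive");
  return (gain - cost) / cost;
}

// Invented figures: $50,000 attributable gross profit on a $20,000 project.
console.log(roi(50000, 20000)); // 1.5
```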

Impact on Conversion Rates and Sales

A smooth and frictionless checkout process is vital for converting visitors into paying customers. Checkout Extensibility allows businesses to streamline the checkout flow, making it easier for customers to complete their purchases. By reducing the number of steps, simplifying form fields, and integrating trusted payment gateways, businesses can lower the likelihood of cart abandonment and achieve higher conversion rates.
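Why removing steps helps can be seen with simple arithmetic: overall checkout conversion is the product of each step's pass-through rate, so every extra step multiplies the result by a number below 1. A sketch with invented rates, assuming that merging away one step leaves the other steps' rates unchanged (in reality they may shift).

```typescript
// Overall conversion = product of each step's pass-through rate.
function funnelConversion(stepRates: number[]): number {
  return stepRates.reduce((acc, rate) => acc * rate, 1);
}

// Invented pass-through rates for a four-step checkout.
const ratesBefore = [0.9, 0.8, 0.85, 0.95];
// Assumption: the first step is merged away and the remaining rates stay the same.
const ratesAfter = [0.8, 0.85, 0.95];

console.log(funnelConversion(ratesBefore).toFixed(3)); // 0.581
console.log(funnelConversion(ratesAfter).toFixed(3)); // 0.646
```

Under these assumptions, dropping a step that loses 10% of shoppers lifts overall conversion from about 58.1% to 64.6%.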

Moreover, businesses can implement personalized upsells, cross-sells, and discount offers during the checkout process. This targeted approach capitalizes on the buyer’s intent and can lead to higher average order values, further maximizing revenue and ROI.
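The effect on average order value (AOV) can be estimated the same way: if a share of orders accepts an add-on, expected AOV rises by that share times the add-on's price. A minimal sketch with invented numbers, assuming the upsell does not change what customers would otherwise have bought.

```typescript
// Expected AOV when a fraction of orders accepts an add-on at a fixed price.
function aovWithUpsell(baseAov: number, attachRate: number, upsellPrice: number): number {
  return baseAov + attachRate * upsellPrice;
}

// Invented figures: $60 base AOV, 10% of orders add a $25 accessory.
console.log(aovWithUpsell(60, 0.1, 25)); // 62.5
```

Across 1,000 orders that is about $2,500 of extra revenue, before subtracting the cost of the add-on itself.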

Reducing Cart Abandonment and Increasing Customer Retention

Cart abandonment is a common challenge for online retailers. However, Checkout Extensibility equips businesses with the means to address this issue proactively. By offering multiple payment options, clear shipping information, and a transparent returns policy, businesses can instill trust and confidence in customers, reducing the likelihood of abandonment.

Additionally, the option to save customer information for future purchases simplifies the checkout process for returning customers. This convenience encourages customer loyalty and repeat business, boosting customer retention and contributing to long-term ROI growth.

Leveraging Analytics and Data Insights for Better ROI

The true potential of Checkout Extensibility lies in its ability to collect valuable data and analytics on customer behavior during the checkout process. By integrating with analytics tools, businesses can track various metrics, including drop-off rates, abandoned carts, and conversion funnels.

These data insights provide a comprehensive understanding of customer behavior and pain points during checkout, enabling businesses to make data-driven decisions to optimize the process further. By identifying and addressing areas of improvement, businesses can refine the checkout experience, reducing barriers to purchase and ultimately increasing conversions.
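Given session counts for each checkout step, a simple way to decide where to focus is to compute the drop-off between consecutive steps and flag the largest. A minimal sketch; the `StepCount` and `DropOff` types, the step names, and the counts are invented for illustration.

```typescript
interface StepCount {
  step: string;
  sessions: number;
}

interface DropOff {
  from: string;
  to: string;
  rate: number; // share of sessions lost between the two steps
}

// Return the consecutive pair of steps with the highest drop-off, or null if there is none.
function worstDropOff(funnel: StepCount[]): DropOff | null {
  let worst: DropOff | null = null;
  for (let i = 0; i + 1 < funnel.length; i++) {
    const prev = funnel[i];
    const next = funnel[i + 1];
    if (prev.sessions === 0) continue; // avoid dividing by zero
    const rate = 1 - next.sessions / prev.sessions;
    if (worst === null || rate > worst.rate) worst = { from: prev.step, to: next.step, rate };
  }
  return worst;
}

// Invented funnel counts.
const funnel: StepCount[] = [
  { step: "contact", sessions: 1000 },
  { step: "shipping", sessions: 820 },
  { step: "payment", sessions: 560 },
  { step: "completed", sessions: 500 },
];
const worst = worstDropOff(funnel);
if (worst) console.log(`${worst.from} -> ${worst.to}: ${(worst.rate * 100).toFixed(1)}%`); // shipping -> payment: 31.7%
```

Looking at step-to-step rates rather than raw counts keeps the comparison fair, since later steps always have fewer sessions.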

Additionally, analyzing customer data can lead to insights on preferred payment methods, popular products, and peak purchasing times. Armed with this knowledge, businesses can fine-tune their marketing strategies, optimize product offerings, and implement targeted promotions to maximize ROI.
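Questions such as the most popular payment method or the busiest hour reduce to counting orders by a key. A minimal sketch over a hypothetical `Order` shape with invented sample data and placeholder payment-method labels; hours are taken in UTC here to keep the output deterministic, whereas a real analysis would usually use the store's time zone.

```typescript
interface Order {
  paymentMethod: string;
  createdAt: Date;
}

// Most frequent key among `items`, or null when there are none; earlier keys win ties.
function mostCommon<T>(items: T[], keyOf: (item: T) => string): string | null {
  const counts = new Map<string, number>();
  for (const item of items) {
    const key = keyOf(item);
    counts.set(key, (counts.get(key) ?? 0) + 1);
  }
  let best: string | null = null;
  let bestCount = 0;
  for (const [key, count] of Array.from(counts.entries())) {
    if (count > bestCount) {
      best = key;
      bestCount = count;
    }
  }
  return best;
}

const order = (paymentMethod: string, iso: string): Order => ({ paymentMethod, createdAt: new Date(iso) });
const orders: Order[] = [
  order("card", "2023-07-01T10:15:00Z"),
  order("shop_pay", "2023-07-01T20:05:00Z"),
  order("shop_pay", "2023-07-02T20:40:00Z"),
  order("paypal", "2023-07-03T09:00:00Z"),
  order("shop_pay", "2023-07-03T20:30:00Z"),
];

console.log(mostCommon(orders, (o) => o.paymentMethod)); // shop_pay
console.log(mostCommon(orders, (o) => String(o.createdAt.getUTCHours()))); // 20
```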

Case Studies of Shopify Plus Checkout Extensibility

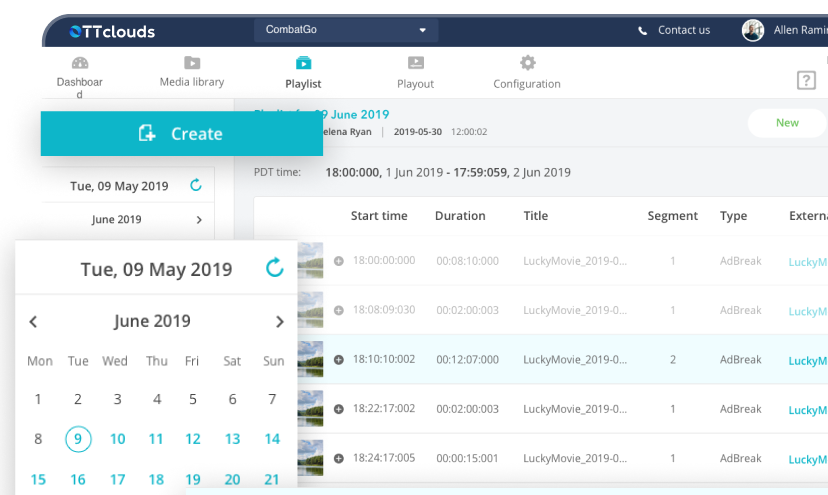

FastTech Electronics – Doubling Conversions with Checkout Extensibility

By customizing their checkout process, FastTech Electronics aimed to reduce cart abandonment and enhance the overall customer experience. The results were remarkable, with the company managing to double their conversion rates within just a few months.

Upon adopting Checkout Extensibility, FastTech Electronics revamped their checkout page to align with their brand’s identity. They optimized the layout, making it more intuitive and visually appealing. By streamlining the checkout flow and removing unnecessary steps, they made it easier for customers to complete their purchases, resulting in a substantial decrease in cart abandonment.

Moreover, FastTech Electronics leveraged the customization options provided by Checkout Extensibility to introduce personalized upsell and cross-sell offers based on individual customer preferences and purchase history. This targeted approach led to increased average order values and contributed significantly to the improved conversion rates.

StyleHub Clothing – Personalization and its Effect on Customer Engagement

In our second case study, we explore the journey of StyleHub Clothing, a fashion retailer that leveraged Checkout Extensibility to focus on personalized customer engagement during the checkout process.

With Checkout Extensibility, StyleHub Clothing integrated personalized product recommendations based on the shopper’s browsing history, previous purchases, and preferred styles. By displaying relevant suggestions during checkout, the company experienced an increase in cross-selling and up-selling opportunities, contributing to higher average order values and improved revenue.

Moreover, StyleHub Clothing utilized Checkout Extensibility to offer a seamless loyalty program integration during checkout, rewarding loyal customers with exclusive discounts and incentives. This personalized approach fostered a stronger emotional connection with customers, resulting in improved brand loyalty and repeat purchases, ultimately enhancing their overall customer engagement and driving long-term success.

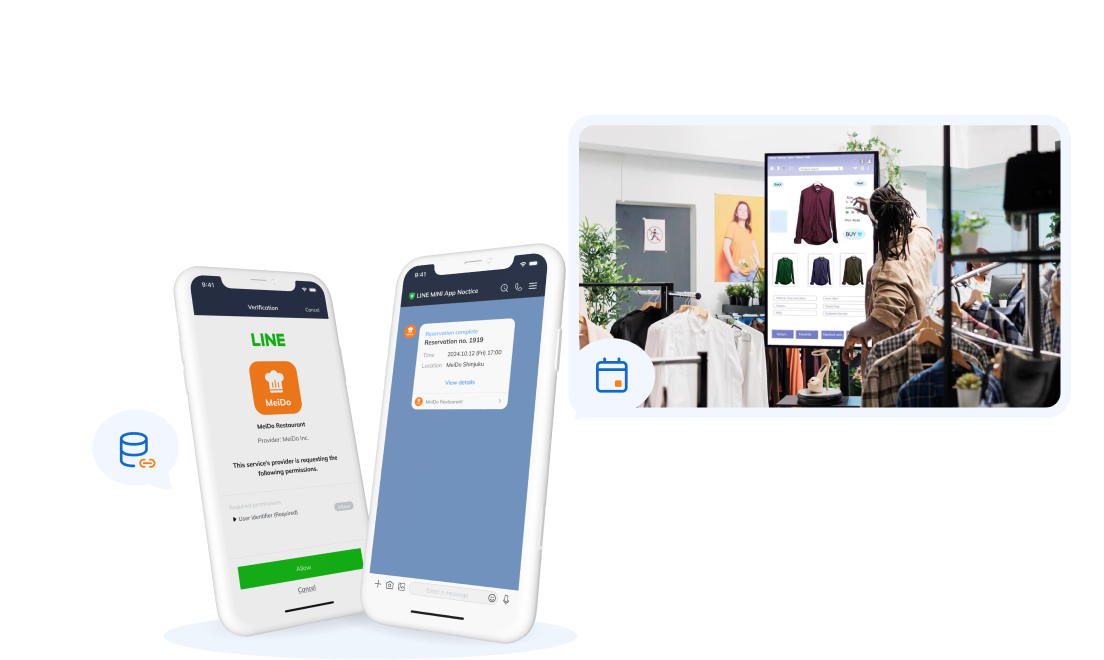

Get your Shopify Plus custom development solutions from SupremeTech!

SupremeTech offers comprehensive solutions to migrate your current website to Shopify. We have the expertise to build tailored software that streamlines your e-commerce management and enhances the customer experience.

Contact us now to take a step forward and discover how you can make your unique business idea come true with our technical solutions.

Reference: Building Powerful Checkout Customizations with Checkout Extensibility – By Martin Laws – Development Manager at Shopify