Best Practices for Hiring a Dedicated Remote Development Team

04/07/2023



In today’s fast-paced business landscape, companies are constantly seeking innovative strategies to stay ahead of the competition. One strategy that has gained immense popularity is the dedicated team model.

However, this process requires businesses to prepare their hiring strategy carefully and to evaluate the team on an ongoing basis. In this comprehensive guide, we will walk you through the entire journey step by step. Let’s dive in!

What is a Dedicated Team?

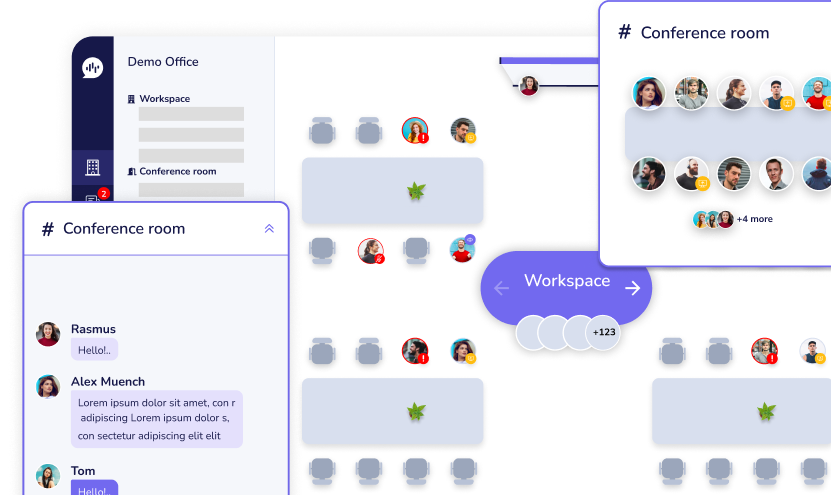

In outsourcing, a dedicated team refers to a group of professionals who are exclusively allocated to work on a specific project or task for a client.

The dedicated team model is the practice of partnering with a remote team of professionals who work solely on your projects or business functions. Unlike traditional outsourcing models, where tasks are assigned to different individuals or teams, the dedicated team model provides a cohesive and integrated approach. This team becomes an extension of your organization, working closely with your in-house teams to achieve shared goals and objectives.

Benefits Of Hiring A Dedicated Remote Development Team

Source: Rootstack

Outsourcing work to a dedicated remote development team can offer numerous benefits for businesses. Here are some key advantages:

- Access to diverse teams from around the world

- Cost savings by avoiding expenses associated with full-time employees

- Flexibility to scale the team based on project needs

- Faster time to market with a focused team

- Specialized skills and expertise in specific technologies or domains

- Focus on core competencies by delegating non-core tasks

- Improved productivity and efficiency through effective collaboration

- Reduced risk and enhanced security measures

By partnering with a dedicated remote development team, such as one provided through SupremeTech’s dedicated team model, you can leverage our experience, expertise, and proven processes. This supports efficient project management and delivery, ultimately benefiting your business in terms of quality, innovation, and customer satisfaction.

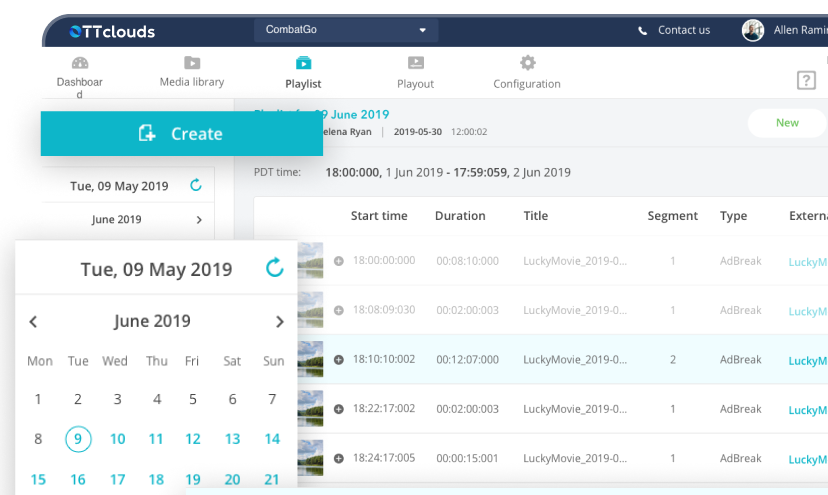

What Should You Prepare Before Hiring A Dedicated Team?

Source: Emizentech

Before deciding to hire a dedicated remote development team, it is important to prepare and consider several factors to ensure a successful collaboration. Here are some key aspects to focus on:

1. Define project requirements: Clearly outline your project goals, scope, and specific technical requirements. This will help you identify the skills and expertise you need from the remote team.

2. Research and shortlist candidates: Conduct thorough research to find potential remote development teams. Look for teams with relevant experience, positive client feedback, and a strong track record in your industry.

3. Set clear expectations: Clearly communicate your expectations regarding project deliverables, timelines, quality standards, and reporting procedures. This will help align the remote team’s work with your business objectives.

4. Encourage knowledge sharing: Promote the exchange of knowledge between your in-house teams and the dedicated team. Foster a culture of collaboration and open communication, where insights and ideas can flow freely, leading to innovation and continuous improvement.

5. Establish a budget: Determine a realistic budget for your dedicated team. Consider factors such as the complexity of the project, the duration of the engagement, and the skills required. Having a clear budget in mind will help you evaluate potential partners and negotiate contracts effectively.
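To make the budget factors concrete, here is a rough sketch of a monthly-rate estimate. Every role name, rate, and the contingency percentage below is a hypothetical placeholder, not a real market figure; replace them with quotes from the vendors you shortlist.

```python
def estimate_budget(team, months, contingency_pct=15):
    """Estimate the total engagement cost.

    team: maps a role name to (headcount, monthly rate per person).
    months: planned duration of the engagement.
    contingency_pct: buffer added on top for scope changes (an assumption).
    """
    monthly_cost = sum(count * rate for count, rate in team.values())
    base_cost = monthly_cost * months
    return base_cost * (100 + contingency_pct) / 100


# Hypothetical team composition and rates, for illustration only.
team = {
    "backend developer": (2, 4000),
    "frontend developer": (1, 3500),
    "qa engineer": (1, 3000),
}

total = estimate_budget(team, months=6, contingency_pct=10)
print(f"Estimated budget: {total:,.0f}")
```

A more complex project usually shows up here as more roles or a longer duration, so adjusting those two inputs is a quick way to compare scenarios before negotiating.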

⇒ Maybe you’ll want to read: How to Do a Cost Estimate for a Project?

How to Hire a Dedicated Remote Development Team?

Source: Emizentech

To hire the right dedicated remote development team, take the following steps:

- Assess communication and collaboration skills

- Conduct interviews and technical assessments

- Check client reviews and references

- Evaluate their project management approach

- Review contracts and legal aspects

- Start with a small project or trial period

1. Assess Communication and Collaboration Skills

Effective communication is crucial in remote work environments. Evaluate the team’s communication skills, responsiveness, and ability to collaborate effectively using remote communication tools.

2. Conduct Interviews and Technical Assessments

Conduct interviews or technical assessments to evaluate the team’s skills. Ask relevant technical questions and observe how they approach and solve problems.

3. Check Client Reviews and References

Read client reviews and testimonials to gain insights into the team’s performance, reliability, and professionalism. If possible, reach out to their previous clients for references.

4. Evaluate Their Project Management Approach

Inquire about their project management methodology and tools. Look for teams that have a well-defined process, clear communication channels, and a proactive approach to project management.

5. Review Contracts and Legal Aspects

Ensure that the team operates within legal frameworks and has proper contracts or agreements in place. Clarify aspects such as intellectual property rights and confidentiality.

6. Start with a Small Project or Trial Period

Consider starting with a small project or a trial period to assess the team’s performance, communication, and ability to meet deadlines. This allows you to gauge their capabilities before committing to a long-term engagement.

⇒ Maybe you’ll be interested in: How to Plan a Software Project?

How to Evaluate the Success of Your Remote Development Team?

Source: Robotics & Automation News

The process does not end once you have hired a dedicated remote development team. You also need to assess how well their work serves your company. To evaluate the success of your remote development team, consider the following strategies and practices:

- Track Adherence to Timelines

- Assess Deliverable Quality

- Seek Feedback from Stakeholders

- Celebrate Achievements

1. Track Adherence to Timelines

Monitor the team’s ability to meet project deadlines and adhere to agreed-upon timelines. Evaluate whether they are delivering work on schedule and identify any instances of delays or missed milestones. Timely delivery is a critical aspect of success in outsourced projects.
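One simple way to quantify this is an on-time delivery rate. The sketch below is illustrative only: the `Milestone` structure, the milestone names, and the dates are hypothetical and do not come from any real project or tracking tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Milestone:
    name: str
    due: date
    delivered: date


def on_time_rate(milestones):
    """Return the share of milestones delivered on or before the due date."""
    if not milestones:
        return 0.0
    on_time = sum(1 for m in milestones if m.delivered <= m.due)
    return on_time / len(milestones)


def delays_in_days(milestones):
    """Map each late milestone's name to the number of days it slipped."""
    return {
        m.name: (m.delivered - m.due).days
        for m in milestones
        if m.delivered > m.due
    }


# Hypothetical sample data, for illustration only.
milestones = [
    Milestone("Design sign-off", date(2023, 5, 1), date(2023, 4, 28)),
    Milestone("Beta release", date(2023, 6, 15), date(2023, 6, 20)),
    Milestone("Launch", date(2023, 7, 30), date(2023, 7, 30)),
    Milestone("Post-launch fixes", date(2023, 8, 15), date(2023, 8, 14)),
]

rate = on_time_rate(milestones)
late = delays_in_days(milestones)
print(f"On-time rate: {rate:.0%}")
print(f"Delays in days: {late}")
```

Reviewing the rate together with the list of delayed milestones at a regular interval makes it easier to tell a one-off slip from a recurring pattern.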

2. Assess Deliverable Quality

Evaluate the quality of the work delivered by the dedicated team. Review whether it meets the agreed-upon standards, aligns with your expectations, and fulfills the project requirements. Assessing deliverable quality helps determine the team’s performance and the value they bring to your business.

3. Seek Feedback from Stakeholders

Gather feedback from relevant stakeholders, such as project managers, team members, or clients who interact with the dedicated team. Their insights provide valuable perspectives on the team’s performance and help identify areas for improvement.

4. Celebrate Achievements

Acknowledge and celebrate the achievements and successes of your outsourced dedicated team. Recognize their contributions, express gratitude, and reinforce a positive working relationship. Celebrating milestones motivates the team and strengthens their commitment to delivering exceptional results.

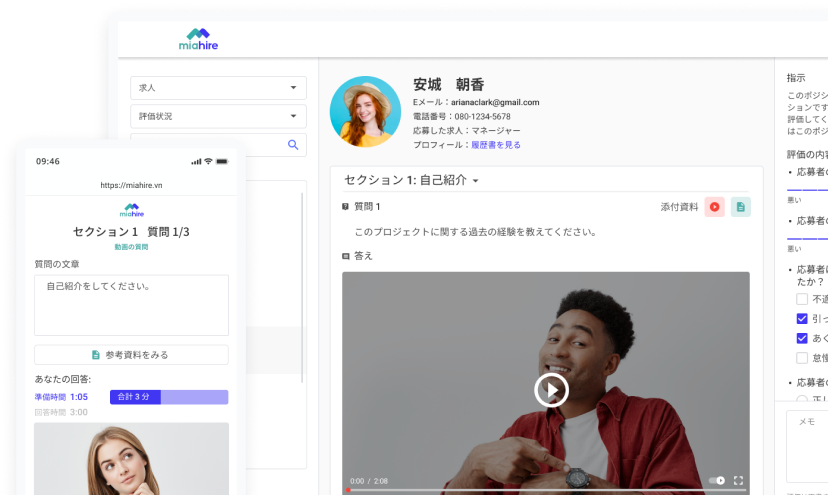

Is a Dedicated Remote Development Team Right for Your Business?

Source: Keene Systems

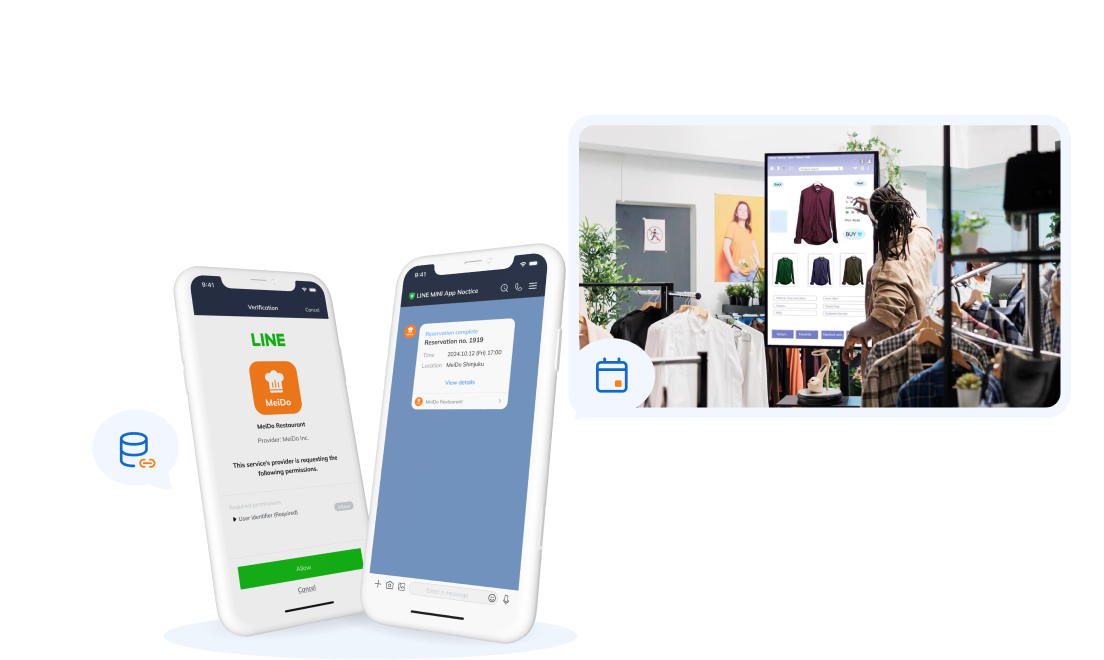

A dedicated remote development team can be a valuable asset for your business, providing various benefits and flexibility. They can offer the expertise and resources needed to drive your projects forward, enhance productivity, and achieve success in today’s dynamic business landscape.

By partnering with a reputable company like SupremeTech and utilizing our dedicated project team model, you can access a highly skilled and experienced development team.

SupremeTech’s dedicated team model offers a tailored approach to suit your business needs. Our team of professionals can efficiently handle both website and mobile application development projects, ensuring high-quality deliverables and meeting project milestones. With expertise in project management and effective communication practices, we can seamlessly integrate with your existing workflows. Contact us today to hire a dedicated remote development team for your future projects!
