Prioritizing Digital Employee Experience in Your Business Strategy

06/04/2023

As more businesses embrace a long-term hybrid work model, the digital employee experience is gaining traction. The reality of a workforce that can work from anywhere has elevated it to a critical business priority. If you want to create a productive, profitable, and stress-free work environment, you must therefore pay special attention to the digital employee experience.

What is Digital Employee Experience?

As the name implies, digital employee experience (DEX) is a reflection of how effectively people interact with their workplace digital tools, allowing them to be engaged, proficient, and productive.

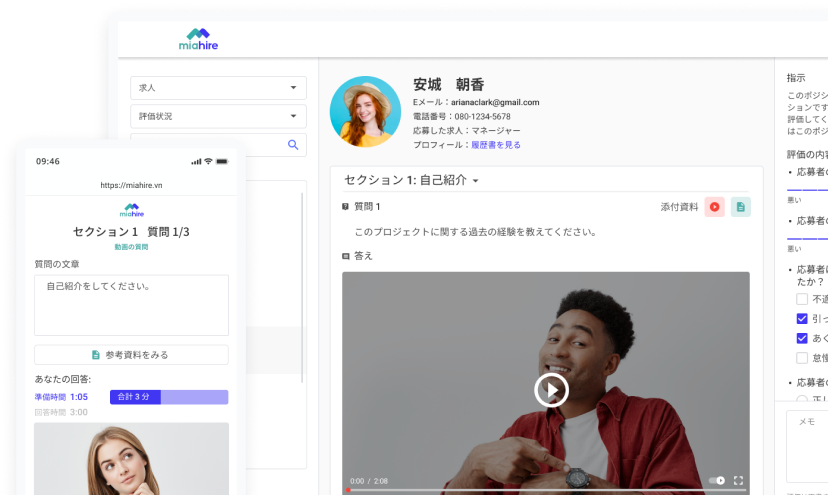

Many examples probably come to mind when you reflect on employees' daily interactions with technology. The digital experience begins as soon as new employees join the company, and it covers touchpoints throughout the hiring, onboarding, training, and even offboarding phases of employment.

Companies around the world can now work more efficiently at home and in remote locations thanks to digital tools. People nowadays expect flexible and remote work options while still feeling connected and valued. According to Sift, digital employee experience is becoming increasingly important in the post-COVID workplace, as well as in shaping the overall employee experience. A strong DEX is required to create the best working environment for employees.

Five Factors of Digital Employee Experience

The digital employee experience includes quality, usability, and dependability. However, it also includes inclusiveness, accessibility, and other aspects of the user experience. Let’s look into particular factors of the digital employee experience:

- Performance of devices and programs: How quickly do they load data, and are they configured well? Do they provide the features employees need to do their work? Do they crash under heavy workloads or after extended use?

- Reliability of devices and programs: Do the apps pose a data security risk or a privacy threat?

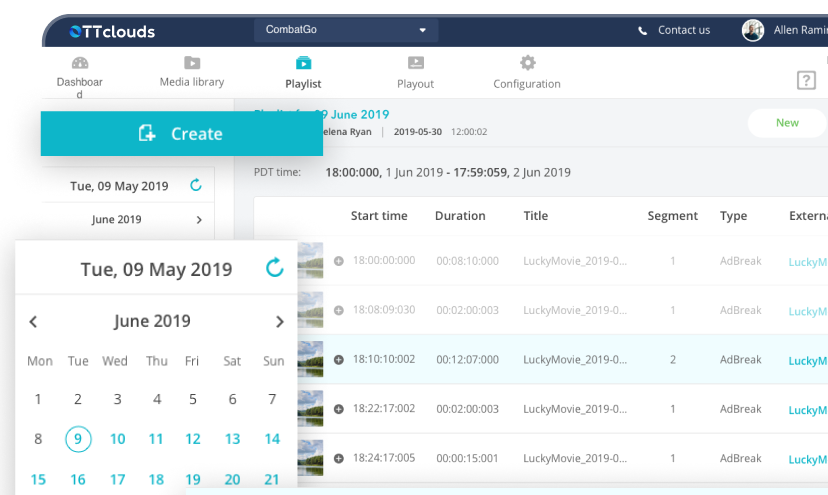

- Mobility: Do employees have the tools necessary to perform their jobs well from any location? Is it possible to log into accounts and sync data across multiple devices?

- Collaboration: Do programs give employees the tools they need to collaborate with their teammates? Do they increase productivity? Do they help a company succeed?

- Convenience: Are the processes of installing software, logging in, and working on the platform quick and simple? Is the program’s interface simple to use? How long does it take to become acquainted with the app’s features?

In addition to the above factors, the digital employee experience includes the way the IT department works with the business and with individual employees to address problems and service requests, deploy new technologies, train staff, and understand and fulfil their requirements.

Benefits of a Positive Digital Employee Experience

According to a joint study by Microsoft and Qualtrics, employees who had a positive digital workplace experience were 121% more likely to feel appreciated by their organization. A good digital employee experience can be beneficial in a number of ways, such as:

Improved Employee Satisfaction and Retention

If you can provide your team with the tools they need to make their jobs simpler, it will almost certainly lead to happier and more engaged workers.

Excellent technology frees workers to work in the way that suits them best. It can promote a healthy work-life balance and make it easier for employees to access the tools they need to complete tasks quickly. This results in higher retention wherever they work, whether remotely, in a hybrid arrangement, or in the office.

Increased Productivity and Efficiency

Giving employees digital tools that are simple to use and reliable will increase output. However, many employees, especially hybrid or remote workers, encounter slow, cumbersome internal systems and dated hardware on a daily basis.

IT support directly affects employee productivity, so make sure your IT team is prepared to handle sources of digital friction, such as network and device problems. With fewer issues, better automation, and quicker remediation, employees spend less time on device maintenance and have more time available for work.

Enhanced Collaboration and Teamwork

Your users can participate in virtual communities if your digital workplace places a strong emphasis on communication and collaboration. These communities remove the geographic barriers that globally distributed workforces must deal with. This can result in faster problem solving, improved knowledge sharing, and higher output from all project participants.

Positive Impact on Company Culture

The workplace is no longer simply a place where people clock in and out and get paid. Work is now a far richer experience than it has ever been. People feel more empowered, in control, and capable of shaping their position in the workplace as a result of integrated technology.

How to Create a Positive Digital Employee Experience?

Here are a few things to keep in mind if you want to improve your business's DEX:

Make it Easy and Accessible

Make digital tools simple to use, accessible, and intuitive for all employees. This could include ensuring that the tools work across the devices employees use, optimizing response times, and providing clear instructions.

Customize Your DEX

People interact with technology in a variety of ways, so digital tools and experiences should be customized to their needs and preferences. For example, allow employees to select their preferred communication channels, and set up support systems based on individual preferences. You could also provide personalized learning experiences, such as customized modules for new employees or videos of company processes.

Measure the DEX

More businesses are using DEX management tools to aid continuous improvement. These tools collect usage and performance data, then analyze the information and trends to produce insights that help guide strategy and improvement.

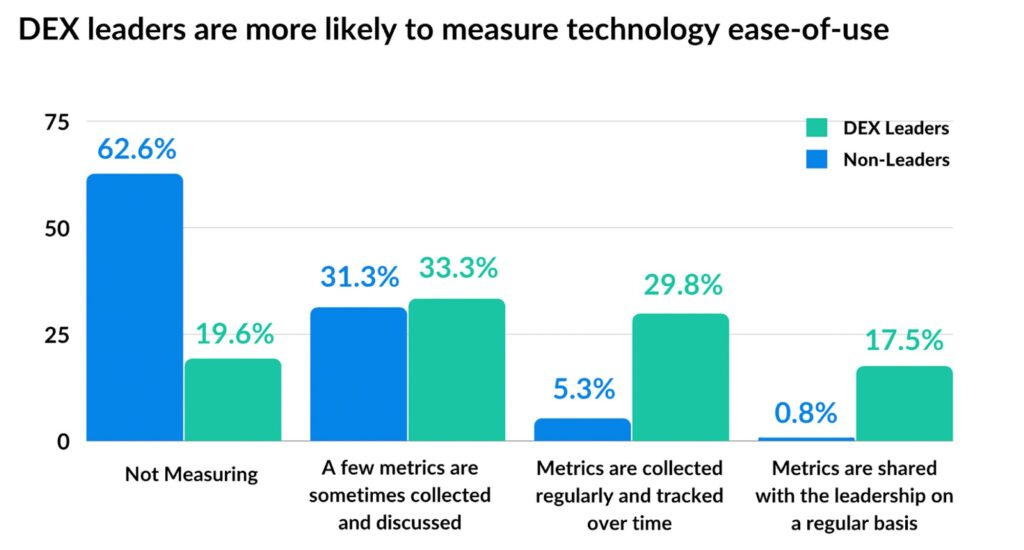

According to Origami, companies that are DEX Leaders are more likely to track their technology's ease of use and to treat it as an important factor in improving the employee experience. Besides that, other aspects of the user experience, such as configuration, performance, and reliability, should also be considered.
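As a rough illustration of what collecting and analyzing this kind of data could look like, here is a minimal Python sketch. It is not how any particular DEX management tool works: the `Sample` record, its fields, the team names, and the thresholds are all hypothetical and chosen only for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Sample:
    """One hypothetical telemetry or survey record (all fields are assumptions)."""
    team: str
    load_seconds: float  # time for a key application to load
    crashes: int         # crashes observed during the period
    ease_of_use: int     # survey rating from 1 (hard) to 5 (easy)


def summarize(samples):
    """Group samples by team and average each metric."""
    by_team = {}
    for s in samples:
        by_team.setdefault(s.team, []).append(s)
    return {
        team: {
            "avg_load_seconds": mean(s.load_seconds for s in group),
            "avg_crashes": mean(s.crashes for s in group),
            "avg_ease_of_use": mean(s.ease_of_use for s in group),
        }
        for team, group in by_team.items()
    }


def needs_attention(summary, max_load=5.0, min_ease=3.5):
    """Return teams whose averages cross the illustrative thresholds."""
    return sorted(
        team
        for team, m in summary.items()
        if m["avg_load_seconds"] > max_load or m["avg_ease_of_use"] < min_ease
    )


# Made-up sample data for two teams.
samples = [
    Sample("sales", 8.0, 2, 3),
    Sample("sales", 6.0, 0, 2),
    Sample("engineering", 2.0, 1, 5),
    Sample("engineering", 3.0, 0, 4),
]
summary = summarize(samples)
print(needs_attention(summary))
```

In practice the thresholds would come from your own baselines, and the list of flagged teams would be a starting point for conversations with employees rather than a verdict.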

Enhance Collaboration

When various departments work together to enhance the digital employee experience, you can approach the process from a broad perspective. This enables management to create a digital employee experience that empowers all employees and optimizes workflows for teams across the organization.

Improve Training and Support

Employees should receive ongoing training and support to help them use digital tools effectively. You could also provide resources such as help documents, instructional videos, and frequently asked questions. This helps ensure that employees have what they need to make the most of their interactions with digital tools.

Conclusion

By investing in DEX, businesses can increase productivity, profitability, and employee satisfaction. Still, it can be difficult to prioritize the digital employee experience and put improvements into practice. Through improved digital adoption solutions, SupremeTech can assist you in achieving your goals for the digital employee experience. Visit us to explore our products, which may be a good fit for improving your digital employee experience and your overall success.

Reference

Jackson, S. (no date) How HR and IT power teams can build digital work with heart, Sift. Available at: https://www.justsift.com/blog-posts/how-hr-and-it-power-teams-can-build-digital-work-with-heart (Accessed: 15 October 2024).
